Thermometers

Among the first signs of illness noted in every culture is fever. Heat is, in fact, one of the four cardinal signs of inflammation that I remember memorizing early in medical school: rubor (redness), calor (heat), tumor (swelling), and dolor (pain). It has therefore always been important for a physician to determine accurately how much a patient's temperature has changed. This was not always an objective process. For centuries, the standard was simply to estimate a degree of hot or cold (1st to 4th degree in either direction), with boiling water as the 4th degree of hot and ice as the 4th degree of cold.

This isn’t to say that tools for measuring temperature didn’t exist. Air thermometers, which rely entirely on the expansion of air as it heats, certainly did; one such design was put forth by Philo Mechanicus (240-200 BCE). Many of the “miraculous devices” built by Hero of Alexandria (10-70 CE) also relied on heated air pushing a liquid up through a tube, for example to open a temple door or to activate a fountain only when the weather was hot. These devices were not, however, designed to take a measurement of temperature. Galileo (1610) is credited with inventing a similar device, one that used alcohol instead of water, and it was his students who are primarily credited with the idea of using such devices to measure the temperature of the human body. Sanctorius, a physician and physiologist (and, importantly, a peer of Galileo), built a mechanism to roughly measure body temperature.

The principle is simple: air expands as it heats up. Trap air in a tube that is closed at one end, and dip the open end into a body of liquid. Heating the closed top of the tube (say, by putting it in one’s mouth) makes the trapped air expand and push liquid out of the tube. When the tube is taken out of the mouth, the air cools and contracts, and the surrounding air pressure pushes liquid back up the tube to fill the space the contracting air leaves behind. From the level the liquid rises to, one can roughly estimate the person’s temperature, though not with much accuracy or precision, because the temperature and air pressure of the surrounding room also affect the whole mechanism.

The solution to this problem was the enclosed, liquid-based thermometer. There are a few contenders for its invention, but it seems that by the mid-1600s many minds were converging on the first liquid thermometers. After the death of Galileo (who had spent his final years under house arrest for heresy), Ferdinand II set up a formal academy for the advancement of scientific knowledge and experiment. He and many of Galileo’s students worked together on the advances that made the liquid thermometer possible. In these instruments, instead of air expanding and liquid filling in the gaps, the liquid itself does the expanding. This allows for a faster measurement, and because the glass is sealed, the barometric pressure of the environment no longer changes the reading. Today, companies still make the “Galileo thermometers” that Ferdinand II first invented around 1641.

Although there was some early interest in mercury as a possible filler, the consensus in the mid-1600s was to use alcohol, which was extremely sensitive to changes in temperature and also economical. Mercury, by contrast, is more sensitive to temperature change than water but less so than alcohol. Still, its predictable expansion and the fact that it stays liquid over a wide range (roughly −38°F to 674°F) meant it would eventually be adopted by the scientific community. This shift began when Edmund Halley adopted mercury for his thermometers in the late 1600s.

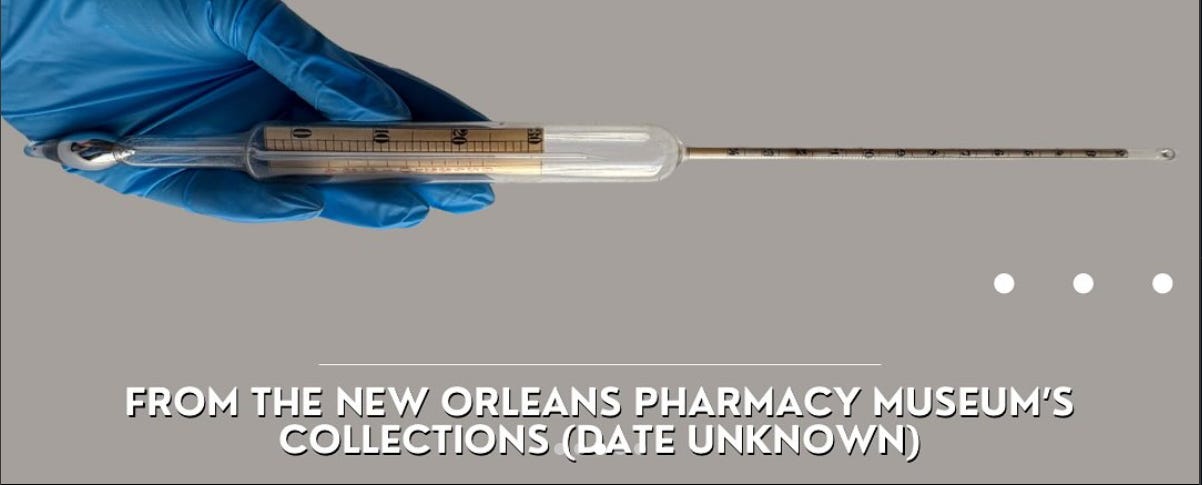

Daniel Gabriel Fahrenheit later marked his mercury thermometers (1714) with the temperature scale he had created, and that scale went on to become the standard. Fahrenheit even developed a method of purifying the mercury so that it would not stick to the walls of the glass, which made his thermometers much easier to read and made each one he produced reliable and consistent with his scale.

Mercury thermometers remained the standard for daily and medical use, and they became far more practical after Sir Thomas Clifford Allbutt designed a short, portable clinical thermometer in 1867. However, mercury is neurotoxic and can be dangerous, especially when its vapor is inhaled. While the mercury is safe inside the glass, a broken thermometer posed a particular risk around children. With modern digital thermometers available, U.S. states began banning mercury thermometers for medical use in 2001, marking the beginning of the end. In 2011, NIST (the National Institute of Standards and Technology) decided it would no longer calibrate mercury thermometers; calibration checks each instrument against a reference standard so that every thermometer reports the same temperature. Because NIST had filled that role since 1901, its decision effectively marked the end of the mercury thermometer in the USA.